Advantages and risks of Software Defined Storage (SDS)

In recent years, companies have had to contend with a large increase in data volumes, and this trend will continue in the years to come. Every year, more data is collected and stored. According to a study conducted by EMC, our digital universe is growing 40% a year into the next decade. Just think about how much data you collect and store today compared with five years ago, and about all the corporate regulations that mandate data retention periods. The question is: what should we do with all the data?

Until now, IT departments have dealt with the burden of big data by purchasing new storage systems as current systems reach capacity. This traditional approach of adding hardware can be costly and inefficient, and the increasingly complex architecture tends to create bottlenecks that slow the system down.

The Solution

The concept of Software Defined Storage (SDS) promises to be a solution to the problem of rising amounts of data. As the name suggests, the entire storage landscape is managed from a single software platform. Unlike traditional SAN, NAS or RAID systems, SDS systems are free from controllers designed only for the products of a particular manufacturer, which releases the system from hardware restraints. This makes it theoretically possible to combine software and hardware from different manufacturers, which can result in an enormous improvement in performance. Some manufacturers advertise speeds up to five times faster than hardware-based systems.

Implementation can be a challenge, but in many cases the systems are structured so that existing storage can easily be expanded with additional drives or devices. There are even service partners and vendors that offer special services for those cases where implementation does not go as smoothly as expected.

Basically, a modern SDS system works like this (details vary with how the system is built): the appropriate SDS software is installed on the servers and/or client computers in use. This software provides all the functions needed to connect over a network to every attached storage medium. For example, two servers in an SDS system run the SDS software and are connected to three SAN storage systems. In this arrangement, every SDS server can communicate with each storage system and change data. There are almost no limits to the possible SDS configurations, and system admins can provision free space within minutes and distribute storage utilization across multiple disks and storage platforms.
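To make the idea of distributing utilization across several storage systems more concrete, here is a minimal Python sketch. It is not how any particular SDS product works: the `Backend` and `StoragePool` classes, the names `san-a` to `san-c`, and the "fill the emptiest backend first" rule are all assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Backend:
    """One attached storage system (hypothetical model, not a vendor API)."""
    name: str
    capacity_gb: int
    used_gb: int = 0

    @property
    def free_gb(self) -> int:
        return self.capacity_gb - self.used_gb


@dataclass
class StoragePool:
    """Presents several backends as a single pool of capacity."""
    backends: list[Backend] = field(default_factory=list)

    def free_gb(self) -> int:
        return sum(b.free_gb for b in self.backends)

    def allocate(self, size_gb: int) -> dict[str, int]:
        """Reserve size_gb, one gigabyte at a time, on the emptiest backend.

        Returns a mapping of backend name to gigabytes placed on it.
        """
        if size_gb > self.free_gb():
            raise ValueError("not enough free capacity in the pool")
        placement: dict[str, int] = {}
        for _ in range(max(size_gb, 0)):
            # Assumed policy: always pick the backend with the most free space.
            target = max(self.backends, key=lambda b: b.free_gb)
            target.used_gb += 1
            placement[target.name] = placement.get(target.name, 0) + 1
        return placement


# Three SAN storage systems behind one pool, as in the example above.
pool = StoragePool([
    Backend("san-a", 100),
    Backend("san-b", 100),
    Backend("san-c", 50),
])
print(pool.allocate(30))
```

The caller only asks the pool for capacity and never addresses a specific device, which is the point of managing storage in software. A real system would also have to track where each block lives, which is exactly the extra data structure that matters later when something fails.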

And with this come risks...

The advantages of software-based storage are obvious: better connectivity of existing hardware and joint management of the connected storage systems reduce costs and improve performance. It’s no wonder that Software Defined Everything (SDE) is becoming the latest trend. In the future, all IT hardware will be manageable and controllable by software. Costly, proprietary, hardware-based solutions with manufacturer-dependent controllers, switches, memories or even CPUs may be a thing of the past.

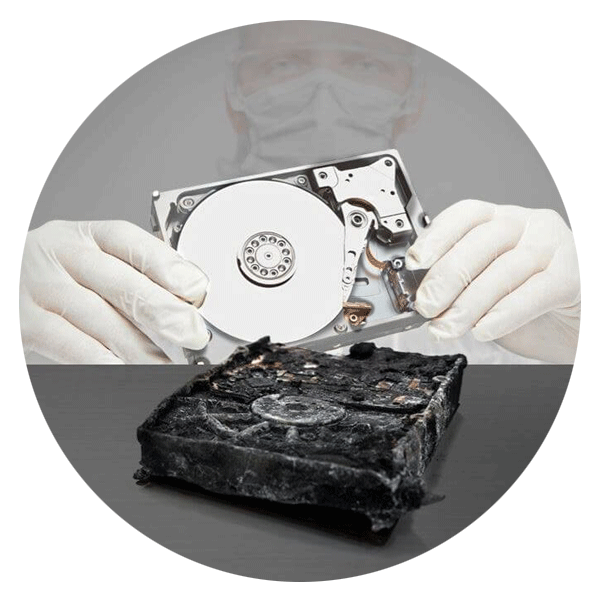

One problem with the increasing use of software defined storage is, however, overlooked or ignored: the new data structures of SDS software solutions lead to additional problems when failures or defects occur. Whether the cause is a physical disk failure due to wear or a loss of data due to human error, the use of SDS systems adds complexity for both the user and professional data recovery. Within the SDS software layer, with its own data structure, there is also the virtual layer, and inside that virtual layer sits the actual data (or possibly databases). Seen this way, the extent of the problem becomes clear. In addition, a variety of different SDS data structures have been introduced, and there is as yet no uniform standard.

Consider this: a few basic tips for the use of SDS

Implement a sophisticated data protection strategy

When using or setting up an SDS system, it is particularly important to use a sophisticated data protection strategy that includes data recovery.

Really implement your backup plan, too

With these systems, it is especially important to develop a solid backup plan and to make sure the plan is actually implemented.

Back up system settings, too!

In addition to the normal backups, it makes sense to back up the current system settings so that both the company and a data recovery specialist can refer to them in the event of a complex system failure.
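As a rough illustration of this tip, the following Python sketch writes a set of settings to a timestamped JSON file. How settings are actually exported depends entirely on the SDS product in use; the `snapshot_settings` function, the file naming scheme, and the example keys are assumptions made for this sketch only.

```python
from __future__ import annotations

import json
from datetime import datetime, timezone
from pathlib import Path


def snapshot_settings(settings: dict, target_dir: Path) -> Path:
    """Write settings as a timestamped JSON file and return its path."""
    target_dir.mkdir(parents=True, exist_ok=True)
    # UTC timestamp in the file name keeps snapshots sortable by date.
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    path = target_dir / f"sds-settings-{stamp}.json"
    # sort_keys makes two snapshots easy to compare with a diff tool.
    path.write_text(json.dumps(settings, indent=2, sort_keys=True), encoding="utf-8")
    return path


# Hypothetical settings; a real export would come from the SDS software itself.
example_settings = {
    "pool": "primary",
    "backends": ["san-a", "san-b", "san-c"],
    "replication_factor": 2,
}
print(snapshot_settings(example_settings, Path("settings-backups")))
```

Keeping these snapshots somewhere outside the SDS system itself is the safer choice, since they are needed most when that system is unavailable.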

Test your backup

In many companies, backups are created more or less automatically, but no one ever checks whether they really work. You should therefore test your backups regularly to confirm that they can be restored.
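One simple way to test a backup is to restore it to a scratch location and compare the restored files with the originals. The Python sketch below does this with SHA-256 checksums. The function names and directory layout are assumptions for illustration, and the approach only suits data that has not changed since the backup was taken.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(source_dir: Path, restored_dir: Path) -> list[str]:
    """Return relative paths that are missing or differ in the restored copy."""
    problems = []
    for src in sorted(source_dir.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(source_dir)
        restored = restored_dir / rel
        if not restored.is_file() or sha256_of(src) != sha256_of(restored):
            problems.append(rel.as_posix())
    return problems
```

An empty result from `verify_restore` means every source file was found in the restored copy with identical content. Any path it returns deserves a closer look before the backup is relied on.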

What may sound like beginner’s tips has a serious background: at Ontrack, data recovery projects keep coming into the laboratory with no functioning backups available. It is precisely with such complex storage systems, with their many integrated technologies and file structure layers, that one should not neglect these relatively simple precautions.

Conclusion

Software Defined Storage is the logical evolution of storage systems, and one thing is already certain: its use will increase in the future. Without SDS, even more sophisticated and modern concepts such as hyper-converged storage or dynamic storage tiering cannot work. Thus, it is no wonder that, according to the IDC white paper from November 2014, "Software Defined storage - IT infrastructure for the next-generation company", 16 percent of all surveyed companies have already invested in software-defined storage technologies and another 35 percent are evaluating future use.

Nevertheless, one must be clear that in these complex storage systems, the demands on IT administration increase significantly with regard to data and system security. Sophisticated data recovery and disaster recovery strategies and regular backups are a must here. If, despite all preparation, a failure results in data loss, it is better, because of the complexity of the data structures, to contact a data recovery service provider who can demonstrate sufficient expertise in recovering SDS systems.
