Friday, July 21, 2023 by Ontrack Team

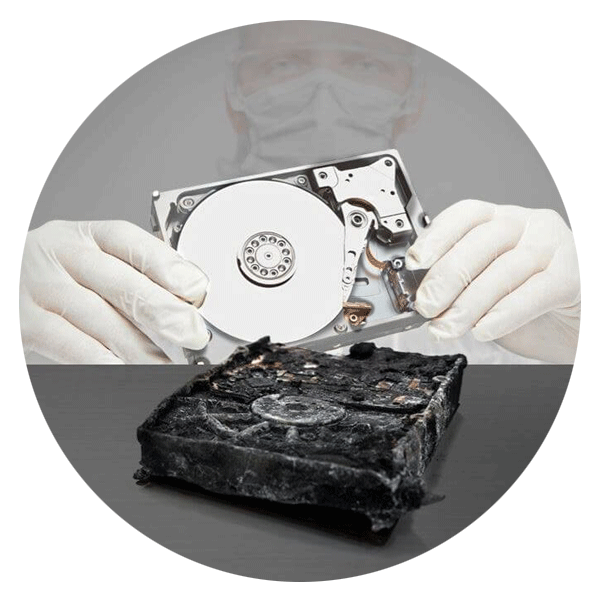

In this article, read about a family who reached out to Ontrack for help recovering photos of lost loved ones from a burned laptop after a terrible tragedy.

Monday, September 19, 2022 by Tim Black

In this article, we investigate what may be causing your hard drive's clicking sound and provide some practical fixes you can try yourself.

Friday, September 9, 2022 by Tim Black

A ransomware attack is one of the biggest threats facing online users. In this article, we explore what happens during a ransomware attack, and the steps you need to take to secure your organization in the aftermath.

Thursday, September 8, 2022 by Shaun Stockman

In this article, we share some practical advice on how to troubleshoot and fix your MacBook Air or MacBook Pro when it’s refusing to turn on, and how to recover any data you fear may be lost.

Monday, August 29, 2022 by Tim Black

Disaster recovery plans are critical for every organization. In this blog, we detail cyberattacks, ransomware, natural disasters, and much more.

Wednesday, August 24, 2022 by Tom Nevin

Ontrack discusses the Blue Screen of Death (BSOD). Since 1987, and with over 2,700 reviews on Trustpilot!

Monday, July 18, 2022 by Michael Nuncic

Hardly a day goes by without a corporate IT system or a privately owned computer being infected by ransomware. Every time, the result is the same: the victims are blackmailed with high monetary demands. The problem is so acute that reports in reputable news media have intensified in recent weeks.

Tuesday, June 28, 2022 by Tilly Holland

Disaster recovery plans are critical for every organization. In this blog, we detail cyberattacks, ransomware, natural disasters, and much more.

Wednesday, June 1, 2022 by Stuart Burrows

Our tape services team provides clients with peace of mind when accessing legacy data on tapes and virtual backup environments. Get in touch to discuss how Ontrack can help get your legacy data under control.

Saturday, May 28, 2022 by Tom Nevin

Are you looking to wipe data from your old phone? Well, look no further than Ontrack as we can do exactly this for you. Give us a call on 952.562.2003.

- Crypto Currency (2)

- Data Backup (9)

- Data Erasure (8)

- Data Loss (15)

- Data Protection (11)

- Data Recovery (22)

- Data Recovery Software (2)

- Data Security (3)

- Data Storage (15)

- Degaussing (1)

- Deleted Data (5)

- Digital Photo (2)

- Disaster Recovery (6)

- Encryption (1)

- Expert Articles (5)

- Hard Drive (7)

- Laptop/Desktop (7)

- Memory Card (5)

- Mobile Device (13)

- Ontrack PowerControls (1)

- RAID (5)

- Ransomware & Cyber Incident Response (6)

- Server (6)

- SSD (14)

- Tape (17)

- Virtual Environment (9)