Understanding Microsoft's Resilient File System (ReFS)

A computer's file system is what organises our files so that they can be stored and found easily. It can also be the key to locating lost data. This week, I decided to take a look at the Resilient File System (ReFS) to determine its advantages and disadvantages and where it should be used.

The first ever file system

The earliest file system on record is the Electronic Recording Machine Accounting (ERMA) Mark 1, a hierarchical file system that was presented in 1958 at the Eastern Joint Computer Conference. In their paper “Organization and Retrieval of Records Generated in a Large-Scale Engineering Project”, Barnard and Fein describe how the idea and structure came about and the situation that they were trying to improve with this file system. Basically, this file system sought to reduce the inefficiencies and errors that occurred from the lack of an organised system.

The idea behind the system was to provide more accurate information, faster and more efficiently. Needless to say, in the age of big data, file systems have come a long way since 1958.

What is ReFS?

The Resilient File System (ReFS) was developed by Microsoft and introduced with Windows Server 2012. Its predecessor, the New Technology File System (NTFS), has been the standard since the early 90s (technically since Windows NT 3.1 on servers, and since Windows 2000 on desktops) and is still in use today. ReFS remains an optional file system that customers have to choose explicitly, but Microsoft plans to replace NTFS with ReFS as the default file system in future Windows releases.

ReFS was designed for systems with large data sets, offering better scalability and availability than NTFS. Data integrity was one of the main new features, protecting business-critical data from common errors that can cause data loss. When a system error occurs, ReFS is designed to recover without losing data and without taking the volume offline. It also addresses media degradation, to prevent data loss as a disk wears out.

ReFS file system features

Allocate on Write

Whether ReFS is the right choice depends largely on how much data your organisation manages. Because of its differences from NTFS, it is normally used for very large data sets. For example, its Allocate on Write approach helps avoid data corruption, and it also makes it possible to create thin-provisioned clones of a source database from several points in time at once, without consuming additional storage. Because metadata is never overwritten in place, corruption caused by interrupted in-place updates is avoided.
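To make the cloning idea concrete, here is a toy Python model. It is a sketch under stated assumptions, not ReFS's real block-cloning code: a file is just a list of block IDs, blocks are reference-counted, and a clone copies the block list rather than the data. `BlockStore` and its methods are hypothetical names chosen for illustration.

```python
# Toy model (hypothetical, not ReFS internals): files are lists of block IDs,
# blocks are reference-counted, and clones share blocks instead of copying them.


class BlockStore:
    def __init__(self):
        self.data = {}      # block id -> bytes
        self.refs = {}      # block id -> number of files pointing at it
        self.next_id = 0

    def allocate(self, payload: bytes) -> int:
        bid = self.next_id
        self.next_id += 1
        self.data[bid] = payload
        self.refs[bid] = 1
        return bid

    def release(self, bid: int) -> None:
        self.refs[bid] -= 1
        if self.refs[bid] == 0:   # last reference gone: reclaim the space
            del self.data[bid], self.refs[bid]

    def clone(self, blocks: list[int]) -> list[int]:
        # A clone copies only the block map and bumps reference counts;
        # no file data is duplicated.
        for bid in blocks:
            self.refs[bid] += 1
        return list(blocks)

    def write(self, blocks: list[int], index: int, payload: bytes) -> None:
        # Never overwrite in place: put the new contents in a fresh block,
        # repoint this file's map, then drop its reference to the old block.
        old = blocks[index]
        blocks[index] = self.allocate(payload)
        self.release(old)


store = BlockStore()
source = [store.allocate(b"A"), store.allocate(b"B"), store.allocate(b"C")]
snapshot = store.clone(source)            # point-in-time copy, no new data blocks
print(len(store.data))                    # 3
store.write(source, 1, b"B2")             # only the changed block is allocated
print(len(store.data))                    # 4
print([store.data[b] for b in snapshot])  # [b'A', b'B', b'C']
print([store.data[b] for b in source])    # [b'A', b'B2', b'C']
```

Reference counting is what keeps the snapshot cheap: it costs an extra block only when a shared block is actually modified.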

By comparison, NTFS maintains on-disk consistency with a transaction journal and updates its metadata in place. After a failure, the journal can be used to work back to the point of the error and perform a recovery. However, if power is lost while the disk is being updated, the metadata can become corrupted; this is known as a torn write. A torn write occurs when only part of a block is written: some of its sectors hold the new data while others still hold the old, so the block matches neither version.
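A short simulation shows what a torn write leaves behind. It assumes, for illustration only, a 4 KiB block made of eight 512-byte sectors and a disk that persists whole sectors in order until power is cut; real drives may reorder writes, and the function name `write_in_place` is hypothetical.

```python
# Simulate an in-place block update interrupted by a power loss.
# Assumptions: 8 sectors of 512 bytes per block, sectors persisted in order.
SECTOR = 512
SECTORS_PER_BLOCK = 8


def write_in_place(disk: bytearray, new_block: bytes, sectors_before_power_loss: int) -> None:
    # Update the block sector by sector; stop early to model a power failure.
    for s in range(min(sectors_before_power_loss, SECTORS_PER_BLOCK)):
        start = s * SECTOR
        disk[start:start + SECTOR] = new_block[start:start + SECTOR]


old = bytes([0xAA]) * (SECTOR * SECTORS_PER_BLOCK)
new = bytes([0xBB]) * (SECTOR * SECTORS_PER_BLOCK)
disk = bytearray(old)

write_in_place(disk, new, sectors_before_power_loss=3)

# Sector-by-sector view of what survived: a mix of old and new contents.
state = ["new" if disk[s * SECTOR] == 0xBB else "old" for s in range(SECTORS_PER_BLOCK)]
print(state)                      # ['new', 'new', 'new', 'old', 'old', 'old', 'old', 'old']
print(bytes(disk) in (old, new))  # False: the block matches neither version
```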

ReFS seeks to eliminate torn writes with Allocate on Write: instead of updating metadata in place, it writes the new version to a different location and then commits it with an atomic operation. Atomic here means the update either takes effect completely or not at all, so a crash leaves the previous version intact rather than a half-written mix. ReFS also checksums its metadata (and, optionally, file data through integrity streams), so it can verify that what it reads back is exactly what was written and detect whether the data on disk has changed or been corrupted since it was last written.
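The following Python sketch models allocate-on-write with checksums. It assumes a volume where blocks live in a dict keyed by address and a single "root" slot names the current metadata block, with updating that one slot treated as atomic. `zlib.crc32` stands in for whatever checksum ReFS actually uses, and `CowVolume` is a hypothetical name.

```python
import zlib


# Allocate-on-write metadata update with checksums (toy model, not ReFS's format).
class CowVolume:
    def __init__(self, initial: bytes):
        self.blocks = {}      # address -> (payload, checksum)
        self.next_addr = 0
        self.root = self._append(initial)

    def _append(self, payload: bytes) -> int:
        addr = self.next_addr
        self.next_addr += 1
        self.blocks[addr] = (payload, zlib.crc32(payload))
        return addr

    def update(self, payload: bytes, crash_before_commit: bool = False) -> None:
        new_addr = self._append(payload)  # 1. write the new version elsewhere
        if crash_before_commit:
            return                        # power lost: root still names the old block
        self.root = new_addr              # 2. commit by switching one pointer

    def read(self) -> bytes:
        payload, stored = self.blocks[self.root]
        if zlib.crc32(payload) != stored:  # contents changed since they were written
            raise IOError(f"checksum mismatch in block {self.root}")
        return payload


vol = CowVolume(b"v1")
vol.update(b"v2")
print(vol.read())                          # b'v2'
vol.update(b"v3", crash_before_commit=True)
print(vol.read())                          # b'v2': old version intact, never torn

# Alter the stored payload behind the file system's back: the checksum catches it.
_, crc = vol.blocks[vol.root]
vol.blocks[vol.root] = (b"vX", crc)
try:
    vol.read()
except IOError as err:
    print(err)                             # checksum mismatch in block 1
```

The crash case is the point of the design: because the new block is written before the root pointer moves, an interruption can only ever leave the old, complete version visible.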

B+ trees

ReFS's capacity allows for very large scalability. As the table below shows, its limits on file and volume size are so high that they are effectively unreachable in practice, which makes it well suited to large data sets.

| Attribute | Limit based on the on-disk format |
| --- | --- |
| Maximum size of a single file | 2^64-1 bytes |
| Maximum size of a single volume | Format supports 2^78 bytes with 16 KB cluster size (2^64 * 16 * 2^10). Windows stack addressing allows 2^64 bytes |
| Maximum number of files in a directory | 2^64 |
| Maximum number of directories in a volume | 2^64 |
| Maximum file name length | 255 Unicode characters (the format allows 32K; for compatibility this was made consistent with NTFS for the RTM product) |
| Maximum path length | 32K |
| Maximum size of any storage pool | 4 PB |
| Maximum number of storage pools in a system | No limit |
| Maximum number of spaces in a storage pool | No limit |

Source: Microsoft Blog
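The volume-size figure in the table can be checked with a few lines of arithmetic. This assumes, as the table's own formula does, that "16 KB" means 16 × 2^10 bytes; the binary unit names (EiB, ZiB) are used for readability.

```python
# Check the volume-size arithmetic: 2^64 addressable clusters of 16 KB each.
clusters = 2**64
cluster_size = 16 * 2**10            # 16 KB = 16 * 2^10 bytes
format_limit = clusters * cluster_size

print(format_limit == 2**78)         # True
print(format_limit // 2**70, "ZiB")  # 256 ZiB, the on-disk format limit
print(2**64 // 2**60, "EiB")         # 16 EiB, the Windows stack addressing limit
```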

ReFS stores its on-disk structures in B+ trees. A B+ tree is a structure for storing and retrieving data in which every node holds an ordered list of keys: interior nodes pair those keys with pointers to lower-level nodes, while leaf nodes hold the records themselves. Each node occupies a fixed-size block, so it can hold only a bounded number of entries.

Source: B+ Tree index structures in InnoDB

The advantage of B+ trees lies in where they store the records, or “satellite information”: at the leaf level. Interior, or ‘non-leaf’, nodes hold only keys and child pointers. Keeping records out of the interior nodes lets each node fit more keys, maximising the branching factor. A high branching factor yields a shallower tree, which means fewer disk I/Os per lookup and therefore better performance.
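To see the branching-factor effect, here is a minimal, read-only B+ tree in Python. It is a generic sketch, not ReFS's on-disk format: it is bulk-loaded from sorted keys, does not support insertion or node splitting, and `fanout` (the maximum entries per node) is an assumed parameter. Each node visited stands in for one disk read.

```python
from bisect import bisect_left, bisect_right
from dataclasses import dataclass


@dataclass
class Leaf:
    keys: list      # sorted keys
    values: list    # records ("satellite information") live only in leaves


@dataclass
class Interior:
    keys: list      # separators: keys[i] is the smallest key under children[i + 1]
    children: list


def first_key(node):
    while isinstance(node, Interior):
        node = node.children[0]
    return node.keys[0]


def build(items, fanout):
    """Bulk-load a B+ tree from a non-empty list of (key, value) pairs sorted by key."""
    level = [Leaf([k for k, _ in items[i:i + fanout]], [v for _, v in items[i:i + fanout]])
             for i in range(0, len(items), fanout)]
    while len(level) > 1:  # group nodes under parents until one root remains
        level = [Interior([first_key(c) for c in level[i + 1:i + fanout]], level[i:i + fanout])
                 for i in range(0, len(level), fanout)]
    return level[0]


def search(root, key):
    """Return (value or None, number of nodes visited on the way down)."""
    node, visits = root, 1
    while isinstance(node, Interior):
        node = node.children[bisect_right(node.keys, key)]
        visits += 1
    i = bisect_left(node.keys, key)
    if i < len(node.keys) and node.keys[i] == key:
        return node.values[i], visits
    return None, visits


items = [(k, k * k) for k in range(1000)]
for fanout in (4, 64):
    value, visits = search(build(items, fanout), 777)
    print(fanout, value, visits)
# 4 603729 5
# 64 603729 2
```

With 1,000 records, raising the fanout from 4 to 64 cuts a lookup from five node reads to two, which is the "lower tree, less disk I/O" effect described above.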

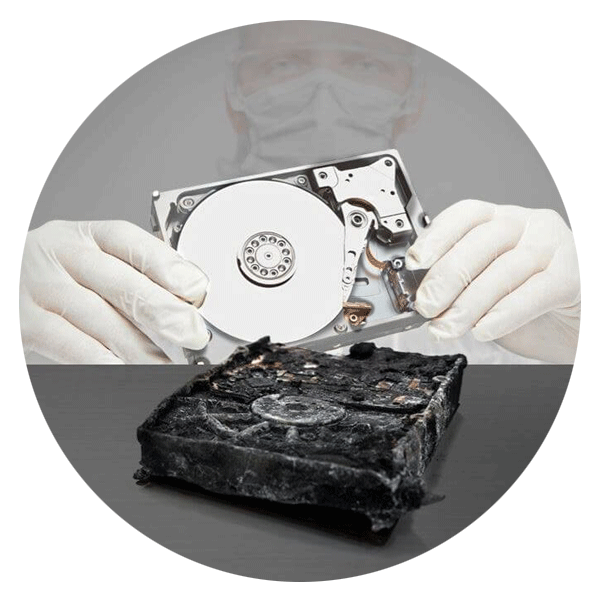

What does this mean for data recovery?

ReFS is structured like a database, so recovering data from it is completely different from NTFS recovery, where metadata sits in a flat table (the Master File Table). To find data in ReFS, we have to traverse it like a database, opening tables that point to further tables, and so on.
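The "tables that point to tables" traversal can be sketched in Python. The layout here is entirely hypothetical, loosely shaped like the description above rather than the real ReFS format: an object table maps an ID to that object's own table, and directory entries either describe a file or name the ID of a nested directory table. `object_table` and `lookup` are illustrative names.

```python
# Hypothetical object table: object ID -> that object's own table.
object_table = {
    "root": {"reports": ("dir", "obj-700"), "readme.txt": ("file", {"size": 1200})},
    "obj-700": {"q1.xlsx": ("file", {"size": 48_213})},
}


def lookup(path: str) -> dict:
    """Walk from the root table, opening one nested table per directory level."""
    table_id = "root"
    *dirs, filename = path.strip("/").split("/")
    for name in dirs:
        kind, target = object_table[table_id][name]
        if kind != "dir":
            raise NotADirectoryError(name)
        table_id = target              # open the next, nested table
    kind, meta = object_table[table_id][filename]
    if kind != "file":
        raise IsADirectoryError(filename)
    return meta


print(lookup("/reports/q1.xlsx"))      # {'size': 48213}
```

A recovery tool has to follow the same chain: if one intermediate table is damaged, everything beneath it must be found some other way, which is why ReFS recovery needs database-style tooling.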

This database structure makes ReFS more complicated than NTFS, but it also has advantages: the file system's metadata is spread across many tables rather than concentrated in one, which can help when recovering from some types of damage.

Because ReFS is an allocate-on-write (also called “copy-on-write”) file system, older copies of data often remain on disk and can be used for recovery if the primary copy is damaged. However, bear in mind that because ReFS is structured like a database, an engineer needs special tools and training to decode a file's metadata.
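The recovery idea can be illustrated with a small Python sketch: given several on-disk copies of the same record, pick the newest one whose checksum still validates. The scenario is hypothetical; the `generation` numbers, `Copy` record, and `best_copy` function are illustrative assumptions, and `zlib.crc32` stands in for whatever checksum the real structures use.

```python
import zlib
from typing import NamedTuple, Optional


class Copy(NamedTuple):
    generation: int   # higher = written more recently (hypothetical field)
    payload: bytes
    checksum: int     # checksum stored alongside the payload


def make_copy(generation: int, payload: bytes) -> Copy:
    return Copy(generation, payload, zlib.crc32(payload))


def best_copy(copies: list[Copy]) -> Optional[Copy]:
    # Newest first; skip any copy whose contents no longer match its checksum.
    for cp in sorted(copies, key=lambda c: c.generation, reverse=True):
        if zlib.crc32(cp.payload) == cp.checksum:
            return cp
    return None


copies = [
    make_copy(1, b"size=100"),
    make_copy(2, b"size=250"),
    make_copy(3, b"size=400")._replace(payload=b"size=4\x00\x00"),  # damaged primary
]
found = best_copy(copies)
print(found.generation, found.payload)  # 2 b'size=250'
```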