Monday, September 19, 2022 by Tim Black

In this article, we investigate what may be causing your hard drive's clicking sound and offer some practical fixes you can try yourself.

Thursday, July 1, 2021 by Tilly Holland

Ontrack discusses what to consider when purchasing a new hard drive.

Wednesday, January 9, 2019 by Michael Nuncic

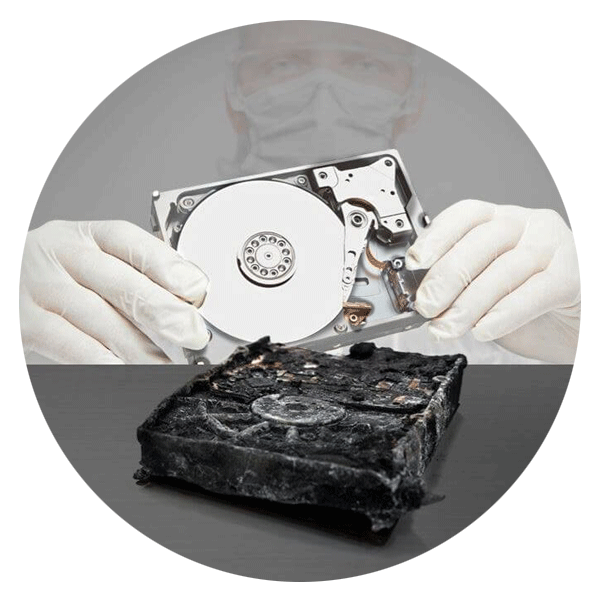

The global leader in data recovery since 1987. We perform HDD and SSD data recovery from any make, model or file system. Start recovering your data today!

Tuesday, May 26, 2015 by Jennifer Duits

The metrics tracked by S.M.A.R.T. (Self-Monitoring, Analysis and Reporting Technology) tools, called attributes, vary from manufacturer to manufacturer. Typical examples include the number of hours the drive has been powered on, the time it takes for the spindle to reach operating speed, and the count of reallocated sectors.
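To make the idea of attributes concrete, here is a minimal Python sketch that reads rows in the ten-column layout printed by smartmontools' `smartctl -A` command and flags a nonzero reallocated-sector count. It assumes that standard table layout; the helper names are hypothetical, the sample rows are invented for illustration (not taken from a real drive), and treating any reallocated sector as a warning is a simplifying heuristic rather than a manufacturer rule.

```python
from typing import Dict, List

# Commonly reported SMART attribute IDs, with the names smartctl typically shows.
# Vendors may define or interpret attributes differently.
SPIN_UP_TIME = 3           # Spin_Up_Time
REALLOCATED_SECTOR_CT = 5  # Reallocated_Sector_Ct
POWER_ON_HOURS = 9         # Power_On_Hours

# Invented example rows in smartctl's attribute-table layout (illustration only).
SAMPLE = """
ID# ATTRIBUTE_NAME          FLAG     VALUE WORST THRESH TYPE      UPDATED  WHEN_FAILED RAW_VALUE
  3 Spin_Up_Time            0x0027   175   172   021    Pre-fail  Always       -       4250
  5 Reallocated_Sector_Ct   0x0033   200   200   140    Pre-fail  Always       -       8
  9 Power_On_Hours          0x0032   071   071   000    Old_age   Always       -       21345
"""


def parse_attribute_table(text: str) -> Dict[int, int]:
    """Map attribute ID -> raw value (hypothetical helper).

    Assumes columns: ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE
    UPDATED WHEN_FAILED RAW_VALUE. Only the first token of RAW_VALUE is used.
    """
    raw: Dict[int, int] = {}
    for line in text.splitlines():
        fields = line.split()
        # Data rows begin with a numeric ID; the header and blank lines are skipped.
        if len(fields) >= 10 and fields[0].isdigit():
            try:
                raw[int(fields[0])] = int(fields[9])
            except ValueError:
                continue  # some drives report raw values like "123h+45m"; skip them
    return raw


def health_warnings(raw: Dict[int, int]) -> List[str]:
    """Return warnings using a simple heuristic: any reallocated sector is a warning sign."""
    warnings: List[str] = []
    realloc = raw.get(REALLOCATED_SECTOR_CT, 0)
    if realloc > 0:
        warnings.append(f"{realloc} reallocated sector(s)")
    return warnings


if __name__ == "__main__":
    attrs = parse_attribute_table(SAMPLE)
    print(attrs)
    print(health_warnings(attrs))
```

Keying the result by attribute ID rather than by name keeps the sketch usable when vendors label the same attribute differently.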

Monday, January 14, 2013 by David Logue

This post is a continuation of the series on Solid State Drives (SSDs) and their role in enterprise storage. In the first post, I discussed the differences between traditional HDDs and SSDs. In the second post, I looked at the challenges associated with data destruction and asset disposal.

Tuesday, December 18, 2012 by David Logue

Why care about data destruction and asset disposal? According to the US Department of Commerce, data security breaches cost US companies more than $250 billion per year! A few examples will help illustrate the importance of proper data erasure and asset disposal practices.


- Cryptocurrency (2)

- Data Backup (9)

- Data Erasure (8)

- Data Loss (15)

- Data Protection (11)

- Data Recovery (22)

- Data Recovery Software (2)

- Data Security (3)

- Data Storage (15)

- Degaussing (1)

- Deleted Data (5)

- Digital Photo (2)

- Disaster Recovery (6)

- Encryption (1)

- Expert Articles (5)

- Hard Drive (7)

- Laptop/Desktop (7)

- Memory Card (5)

- Mobile Device (13)

- Ontrack PowerControls (1)

- RAID (5)

- Ransomware & Cyber Incident Response (6)

- Server (6)

- SSD (14)

- Tape (17)

- Virtual Environment (9)