Ontrack |Write Amplification | SSD | Ontrack blog

What is Write Amplification (WA) and how does it affect SSDs?

In a recent blog post, we described Garbage Collection and the TRIM command. Both are methods for identifying data that is no longer in use so that the space it occupies can be reclaimed and overwritten in a NAND flash-based SSD.

These two methods try to mitigate a problem that every SSD built from NAND flash chips has: Write Amplification (WA), which is measured by the Write Amplification Factor (WAF).

As we have mentioned before, one of the main differences between a ‘normal’ magnetic hard disk drive (HDD) and a Solid State Drive (SSD) is how they handle data writes. While an HDD can simply overwrite existing data in place, an SSD must always erase the old data before it can write new data to its flash storage chips. This means that, except for brand new SSDs or ones that have been securely erased by the manufacturer before being sold, the flash storage has to be erased before it can be rewritten.

This would not be a problem if erasing were an easy task. However, it is not: deleting data and writing new data on an SSD requires data and metadata to be written multiple times. This is because flash storage is organised into blocks and pages.

Blocks are made up of several pages, and each page is made up of several storage cells. The main challenge is that flash cells can only be erased block-wise but are written page-wise. To write new data to a page, the page must be physically empty. If it is not, its content has to be erased first. However, it is not possible to erase a single page, only all the pages that belong to one block. Because the block sizes of an SSD are fixed (for example, 512 KB, 1,024 KB, or up to 4 MB), a block that contains only a single page with 4 KB of data still takes up the full 512 KB of storage space.

And this is not all. When any data on the SSD is changed, the corresponding block must first be marked for deletion in preparation for writing the new data. The read/modify/write algorithm in the SSD controller then determines the block to be written to, retrieves any data already in it, marks the block for deletion, redistributes the old data, and finally lays down the new data in the old block.

Retrieving and redistributing the old data means that it is copied to a new location, and other complex metadata copying and calculations also add to the total amount of data written.
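The read/modify/write cycle described above can be illustrated with a small Python sketch. It is a toy model and not how any particular controller is implemented: the `Block` class, the page states, the four-page block size, and the `update_in_place` helper are all assumptions made purely for illustration.

```python
# Toy model of one flash block; all names and sizes are illustrative assumptions.
FREE, VALID = "free", "valid"


class Block:
    def __init__(self, pages_per_block):
        self.pages = [FREE] * pages_per_block

    def program(self, index):
        # A page can only be written while it is in the erased (free) state.
        if self.pages[index] != FREE:
            raise ValueError("page must be erased before it can be written")
        self.pages[index] = VALID

    def erase(self):
        # Erasing always affects every page in the block, never a single page.
        self.pages = [FREE] * len(self.pages)


def update_in_place(block, index):
    """Naive read/modify/write of one page; returns pages physically written."""
    # Read: note which other pages still hold valid data.
    survivors = [i for i, state in enumerate(block.pages)
                 if state == VALID and i != index]
    # Erase the whole block, then write the survivors and the new page back.
    block.erase()
    for i in survivors:
        block.program(i)
    block.program(index)
    return len(survivors) + 1


block = Block(pages_per_block=4)
for i in range(4):
    block.program(i)            # fill the block with valid data

pages_written = update_in_place(block, 0)
print(pages_written)            # 4 physical page writes for 1 page of new data
```

In this model, changing a single page in a full four-page block costs four page writes. With realistic block sizes of hundreds of pages, the gap between what the host asked for and what the flash actually has to write grows accordingly.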

The result is that simply deleting data from an SSD causes more data to be written than is removed. Since NAND flash chips can only withstand a limited number of write/erase cycles, Write Amplification (WA) results in lower life expectancy, endurance, and speed.

The Write Amplification Factor (WAF)

As described above, consumer SSDs used to have a high WAF: when you write a new 4 KB file, for example, the Solid State Drive may write 40 KB of data on average. This is because the SSD controller tries to combine data from several partially used blocks to free up pages for new data to be written. In this case, the Write Amplification Factor is 10. Similarly, if 2 GB of data is sent from the host computer to the SSD and 4 GB is written to the flash, the WAF is 2.
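The arithmetic behind these two figures is a simple ratio: the amount of data physically written to the flash divided by the amount of data the host asked to write. A minimal Python sketch follows; the function name `write_amplification_factor` and its parameter names are our own choices for illustration, not part of any drive tool or API.

```python
KB = 1024
GB = 1024 ** 3


def write_amplification_factor(flash_bytes_written, host_bytes_written):
    """WAF = data written to the flash / data written by the host."""
    if host_bytes_written <= 0:
        raise ValueError("host_bytes_written must be positive")
    return flash_bytes_written / host_bytes_written


# The two examples from the text above.
print(write_amplification_factor(40 * KB, 4 * KB))   # 10.0
print(write_amplification_factor(4 * GB, 2 * GB))    # 2.0
```

A WAF of 1 would mean the drive writes exactly as much as the host requested; the higher the value, the faster the flash cells wear out for the same workload.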

Here is a nice explanation from our partner Kingston Technology on how the WAF is calculated.

How to combat Write Amplification?

You can fight the effects of write amplification by keeping free space consolidated on the SSD. You can minimise Write Amplification by enabling the TRIM command, so that the operating system automatically runs TRIM operations in the background to wipe unused disk space clean. However, this comes with a major risk: once TRIM is active and the storage space has been wiped or overwritten, there is no chance of recovering the data originally saved there.

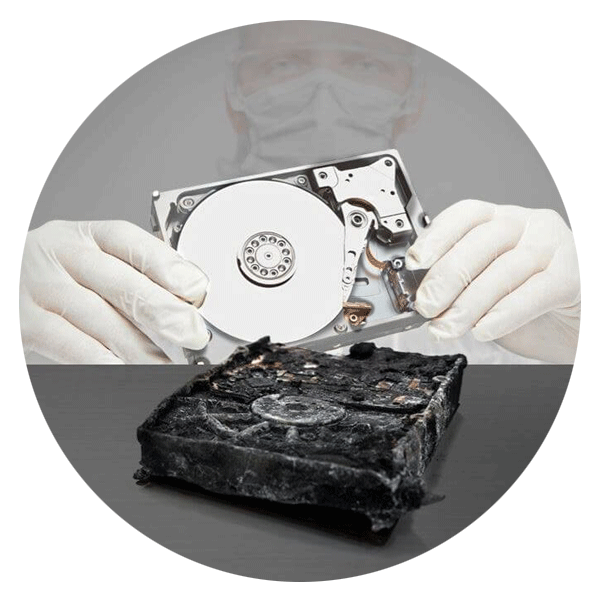

Nevertheless, when it comes to important data, you should not give up immediately, but place the case in the hands of data recovery specialists. In some cases, they can recover data that is "hidden" in other locations, for example, or where the TRIM command was not executed correctly.
